Why is everyone in AI talking about the end of work? | #304

July 28th, 2025: Greetings from Chiang Mai! Some thoughts on AI this week, something I’ve been stewing on for a while.

Within the last six months, everyone working in AI or leading a company seems to be saying some form of “all the jobs are going to disappear.” And if they aren’t saying that, they are saying that we are headed for some sort of massive disruption. If not that exactly, then anyone not using AI is going to be steamrolled by this new digitally automated economy.

What’s true here? What are these people trying to signal when they make these bold pronouncements?

Let’s start with just a sampling of some of these claims.

Anthropic’s CEO, Dario Amodei, seems to be one of the boldest. He told Axios that “AI could wipe out half of all entry-level white-collar jobs, and spike unemployment to 10-20% in the next one to five years,” adding a warning that “most of them are unaware that this is about to happen…It sounds crazy, and people just don’t believe it.” A futures scenario from Scott Alexander and others argues that in 2027, we’ll have models with “the ability to automate most white-collar jobs.” Or as they wrote last week, “we think that sometime in the next 2 - 10 years, AI will enter a recursive self-improvement loop that ends with models capable enough to render all of their ‘well it can’t possibly do this’ calculations moot.”

At the Aspen Ideas Festival, the CEO of Ford said, “Artificial intelligence is going to replace literally half of all white-collar workers in the US…AI will leave a lot of white-collar people behind.” Jamie Dimon of JP Morgan was less bullish, at least in the short term, saying AI would enable them to cut their workforce by 10%. Amazon CEO Andy Jassy said, “It’s moving faster than almost anything technology has ever seen.” Jensen Huang, head of Nvidia and hustle icon, didn’t go full RIP jobs and qualified the transformation by saying, “It’s a great equalizer…Although everyone’s job will be different as a result of AI, some jobs will be obsolete, but many jobs will be created. The one thing we know for certain is that if you’re not using AI, you’re going to lose your job to someone using AI.”

Sam Altman, head of OpenAI, recently wrote of a gentle singularity that nonetheless will include "very hard parts like whole classes of jobs going away." But fear not, “we will figure out new things to do and new things to want.” In a private conversation with a U.S. Senator, he claimed that 70% of jobs may be eliminated by AI. In follow-up testimony in front of Congress, he claimed to be sounding the alarm so boldly because this time, the pace of change is unprecedented. “Society can adapt to a huge amount of job change, and you can look at the last couple of centuries and see how much that’s happened,” Altman said. But, “I don’t think anyone knows exactly how fast this is going to go. But it feels like it could be pretty fast.”

His plan? Everyone should be using AI so that they can “figure out the new things that they’re going to do.”

What are we supposed to do with this?

Could

Leave lots of people behind

Nobody knows

feels like

Figure out new things…

Even though I’m on a weird path, spending lots of time tinkering and doing random stuff (“figuring out things to do”), and have embraced a more fluid relationship with work, and literally spent time practicing “non-work,” I find all of this vague, uninspiring, and quite frankly, a bit dramatic.

In a conversation with Dwarkesh Patel, researchers from Anthropic made even stronger and more specific claims. Sholto Douglas argues that the current tools are already good enough to do most white-collar labor:

Even if algorithmic progress stalls out and we just never figure out how to keep progress which I don't think is the case. Like that hasn't stalled out yet. It seems to be going great. The current suite of algorithms are sufficient to automate white collar work provided you have enough of the right kinds of data. And in a way that like compared to the TAM of salaries for all of those kinds of work is so like trivially worthwhile.

And his colleague, Trenton Bricken, argues that even with the current technology, it will take only five years to automate that work.

Even if AI progress totally stalls, you think that the models are really spiky and they don't have general intelligence. It's so economically valuable and sufficiently easy to collect data on all of these different jobs, these white collar job tasks, such that to Sholto's point, we should expect to see them automated within the next five years.

This seemed to be a point when people were getting a little exhausted by claims, claims, claims. In a response to the episode, Dwarkesh pushed back against this, though he still argued that “If AI progress totally stalls today, I think <25% of white collar employment goes away.”

This is still a big deal! He went on to add some qualifiers:

“without progress in continual learning, I think we will be in a substantially similar position with white collar work - yes, technically AIs might be able to do a lot of subtasks somewhat satisfactorily, but their inability to build up context will make it impossible to have them operate as actual employees at your firm.”

I think a few things are going on here:

First, it’s quite obvious that there is status to be gained from making bold claims while working in the AI industry. At the CEO level, every time one of them makes a crazy or fear-based claim, it gets tons of press. This raises the alarm and, of course, raises the urgency for more immediate funding, which all these people need. At the younger levels in this field, it’s worth noting that, according to their LinkedIn profiles, Sholto, Dwarkesh, and Trenton all graduated in 2020 or later. It seems that in the world of AI, making strong claims about progress is a way of participating in the scene. If I were an early-career researcher, I’d probably publicly participate in the hype too, as it would attract other curious and optimistic people. It’s not fun being a doomer in your twenties.

Second, a lot of weight is being put on this very pivotal “takeoff” moment when the LLMs can start doing the AI research themselves. It is at least somewhat consistent that the people doing AI research think that the LLMs will definitely become better at AI research than the researchers themselves. But in this acknowledgement, there’s a weird tension: they are currently doing incredibly complex and challenging work as the highest-paid knowledge workers in the world, while also believing that theirs is one of the first kinds of jobs that can be fully automated. There are leaps of faith here, and that’s to be expected in such a new field, but I find it a bit unpersuasive that almost every “fast takeoff” scenario from the AI scene involves automating the highest-paid job inside all of the AI labs right now. Maybe I’m missing something here, but there seems to be a lot of hand-waving going on at this specific point in a lot of the projections.

Third, I think a lot of people are mixing up tasks and jobs: If you look at the basket of tasks an accountant did 40 years ago, almost all of the work no longer exists. This is true for many jobs. If you are making claims that many tasks will disappear or be automated, I buy it. I was once a highly paid consultant who would sit on video interviews and take detailed, verbatim notes. This is done 100% by AI now. Now, those consultants are spending more time listening to the conversations, perhaps even participating, and analyzing the reflections. While research from Acemoglu and Restrepo points to the fact that “reinstatement” of new tasks in work that’s automated has slowed in the previous decades, I think I’d need more convincing evidence showing a massive shift in the employment ratio from the elimination of tasks to believe a massive reduction in employment is coming.

Jobs are so much more than a basket of tasks, and I think a lot of people miss this when talking about employment trends. They are a way of transferring wealth to large numbers of people, ways to give people dignity, ways to grant status, vessels for feeling useful, and so much more. I don’t even think we understand how central the job is to modern society, but one way to think about it would be to think about the backlash if all large companies laid off 90% of their workforce. First, it would crush the economy unless there was immediate fiscal stimulus, not to mention the obvious backlash and protests that would ensue. Most companies don’t even push past 10% layoffs (Microsoft laid off about 7% of the workforce in its recent announcements), not to mention widespread firing aversion among the people in charge of hiring decisions at every level of a company. The job, in other words, is not just a bundle of tasks; it is a container that stands in for a complex web of beliefs that undergird society. For this reason, I suspect the job will far outlast most of these predictions, and I’d predict we’ll have an unemployment rate in the US below 10% for the coming decades.

Inside the AI scenius, there have been some more thoughtful takes on this, which feel more grounded.

Dan Shipper’s rebuttal to Trenton and Sholto captured a lot of what I think they are missing about the modern complexity of work (perhaps simply because they haven’t worked in that many jobs!):

If the upshot of this take is that white collar workers are all getting automated I highly doubt that. “If we can get the right data” is actually a HUGE if…there’s a reason why smart college grads take 10 years to become experts in their field… Some data only becomes available for collection once every few years.

A good way to get an intuition for the level of complexity here is to ask a model to predict what people will say next in an internal company meeting. They are all very bad at it—you’ll start to realize just how big the universe of context is and how hard it is to marshall the right context at the right time.

It also makes the mistake of assuming that white collar work is a static target when in fact it’s an extremely fluid, ever changing one.

Also, here is Greg Brockman identifying how much work will need to go into building out the infrastructure for an AI-based economy if it is to emerge:

It's a world where it's just like the AIs are so capable that we all just let them write all the code. Maybe there's a world where you have one AI in the sky. Maybe it's that you actually have a bunch of domain-specific agents that require a bunch of specific work in order to make it happen. I think the evidence has really been shifting towards this menagerie of different models. And I think that's actually really exciting.

So there's actually a lot of power to be had by models that are actually able to use other models. And so I think that that is going to open up just a ton of opportunity because, you know, we're heading to a world where the economy is fundamentally powered by AI. We're not there yet, but you can see it right on the horizon. And the economy is a very big thing. There's a lot of diversity in it. And it's also not static, right? That I think when people think about what AI can do for us, it's very easy to only look at, well, what are we doing now? And how does AI slot in and the percentage of human versus AI, but that's not the point.

The point is how do we get 10x more activity, 10x more economic output, 10x more benefit to everyone, and the barrier to entry will be lower than ever. And so things like healthcare that you can't just, it requires responsibility to go in and think about how to do it right. Things like education where there's multiple stakeholders, the parent, the teacher, the student, each of these requires domain expertise, requires careful thought, requires a lot of work. And so I think that there is going to be just like so much opportunity for people to build. And so I'm just so excited to see everyone in this room because that's the right kind of energy.

What’s the reality?

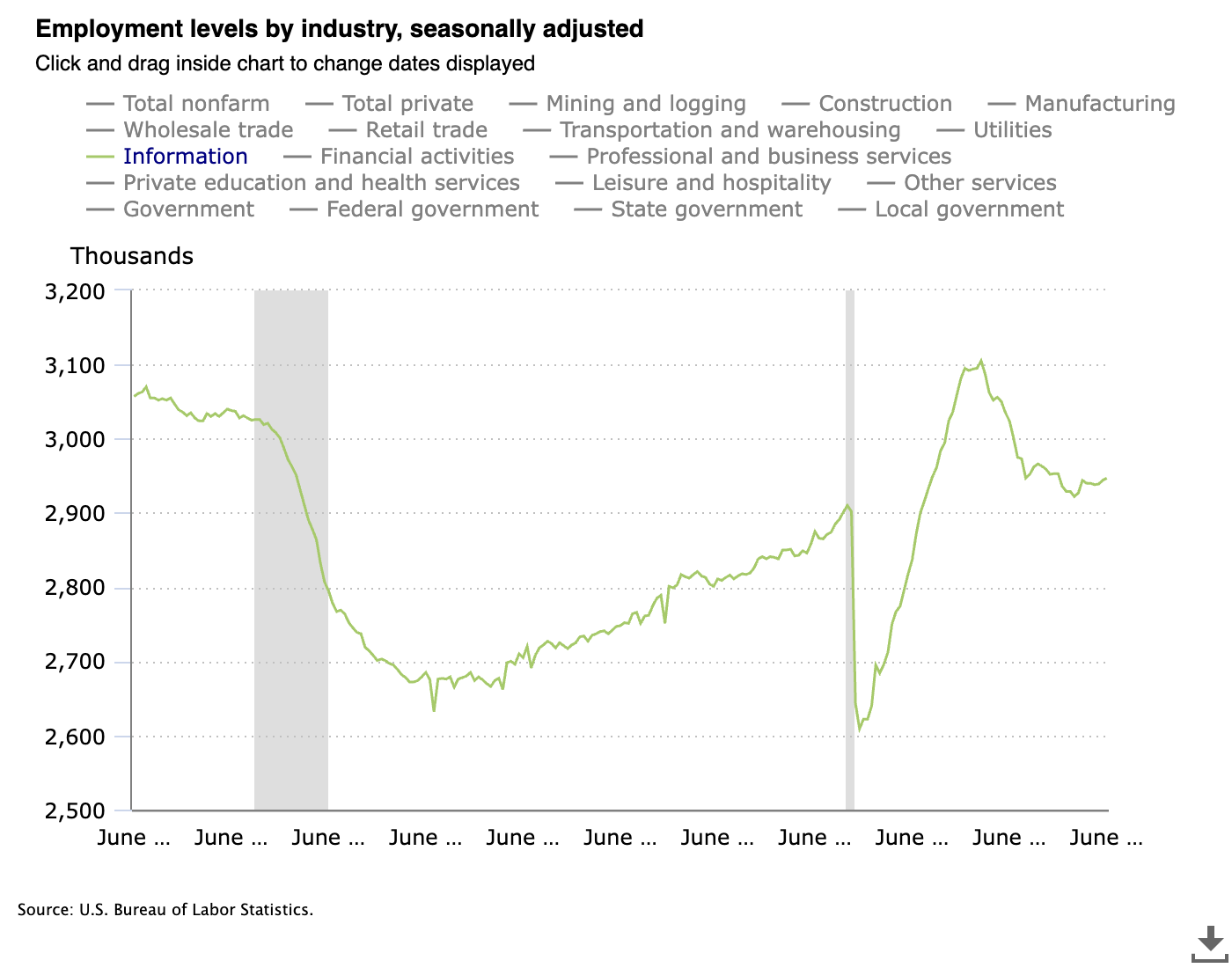

Everyone is a bit jumpy, and any data that points to a dramatic shift in employment gets shared like an old-school chain letter. Last year, there was a chart showing plummeting software engineering job listings, and everyone was ready to call the end of jobs. Here’s a good exploration of why it was misleading, and if you zoom out and look at total employment, you don’t find much plausible evidence of a major change. If you look at “information” sector employment, which includes lots of stuff, you do see some decline in employment, but it seems to be rebounding a bit again.

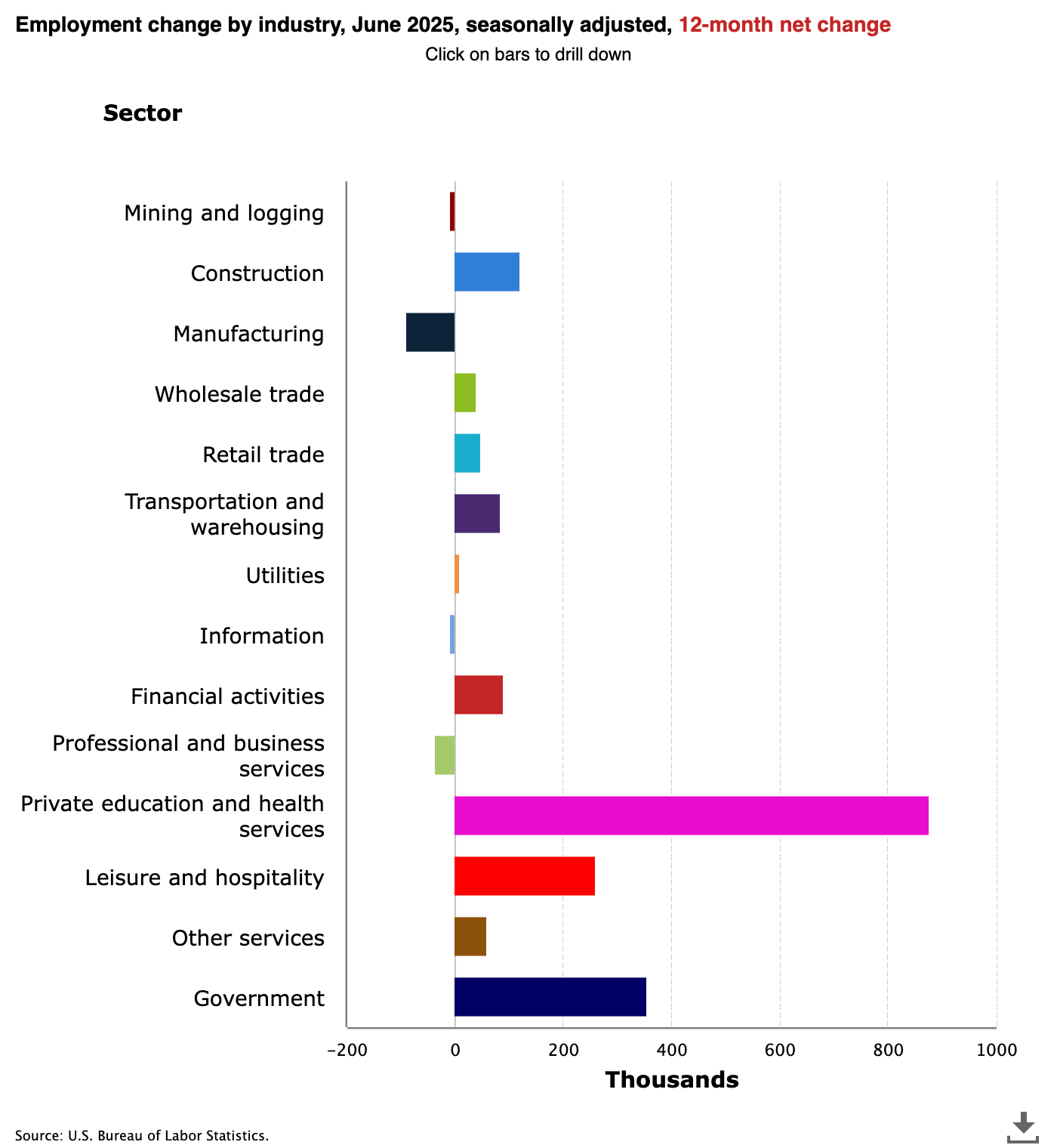

Looking at the last 12 months, we see job growth in most sectors, but we also see a small decline in both the business and information sectors. Is this evidence of AI eliminating jobs? Hard to say.

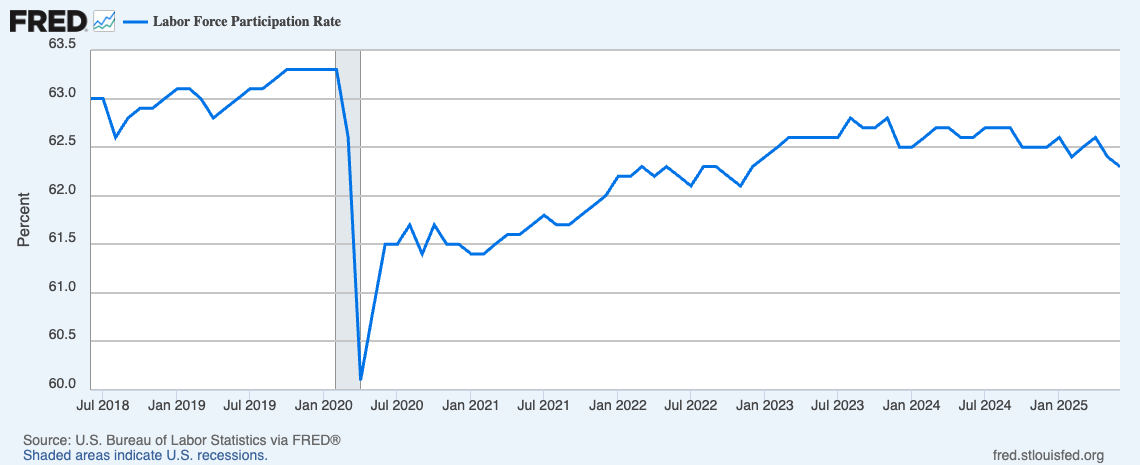

The reality is that employment, at least in the US, hasn’t changed much. Looking at the participation rate, we see a slight decline from pre-COVID, but not a major shift in the past few years.

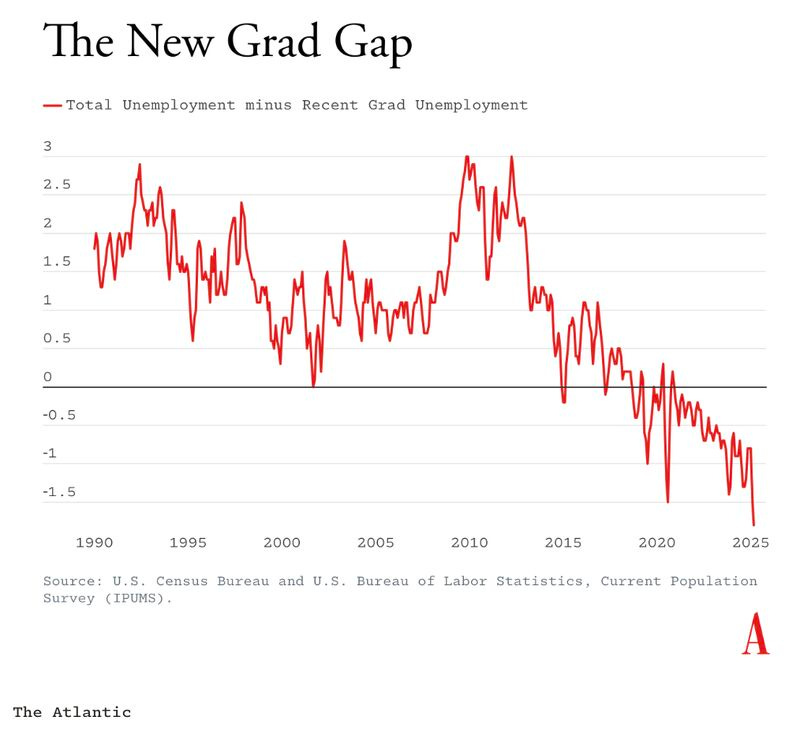

Recently, there was some hubbub about a chart shared by Derek Thompson showing that new hire unemployment was lagging the rest of the economy.

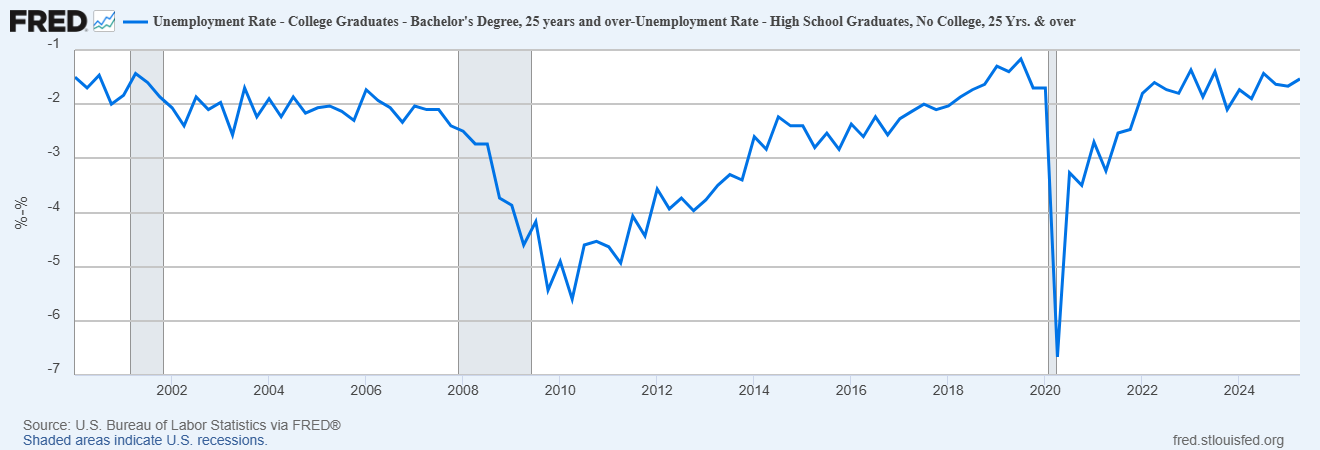

To many, this was proof that AI was already “pulling up the ladder” and that young people’s career opportunities were shrinking. While some of this trend may be true, you can see in the chart that this started far earlier than the appearance of a good-enough LLM. As Noah Smith pointed out, youth unemployment has been in a relatively stable range for the last 20 years.

More Interesting Questions, Back To End of Jobs Sam:

This riff from Sam came from an interview he did in 2023, and it jumped out at me at the time because I think he got to the heart of one of the biggest tensions in our modern relationship with work: we don’t know how much of it we want, or in what form.

He pointed to two opposing views.

On one hand, people point to the value of work: “The dignity of work is just such a huge deal…Even people that think they don’t like their jobs, they need them. It’s really important to them and society.”

The other view is that we should never claw back freedom or make people work more, pointing to the people saying, “Can you believe how awful it is that France is trying to raise the retirement age?”

He goes on, “I think we as a society are confused about whether we as a society want to work more or less and certainly about whether most people like their jobs and get value out of their jobs or not.”

He suggested that we need to move to a world where work can be “a broader concept, not something you have to do to be able to eat, but something you do as a creative expression, and find fulfillment and happiness.” I made the case for this broader conception in my second book, Good Work, but I was doing this in a rather unambitious way at an individual level, a script one could adopt and remix. I’m not sure how to scale this, and as far as I can tell, this is not a popular way to think about work, even among those with the resources and freedom to lean in this direction. Put simply, people love jobs, and this makes me think that the job-shaped container and its benefits and predictable salary will be around for much longer than we think. It sounds great for the masses of humanity to be pursuing creative passions, but as far as I can tell, most people don’t want that, most of all, the post-economic AI researchers who already have enough money to do that!

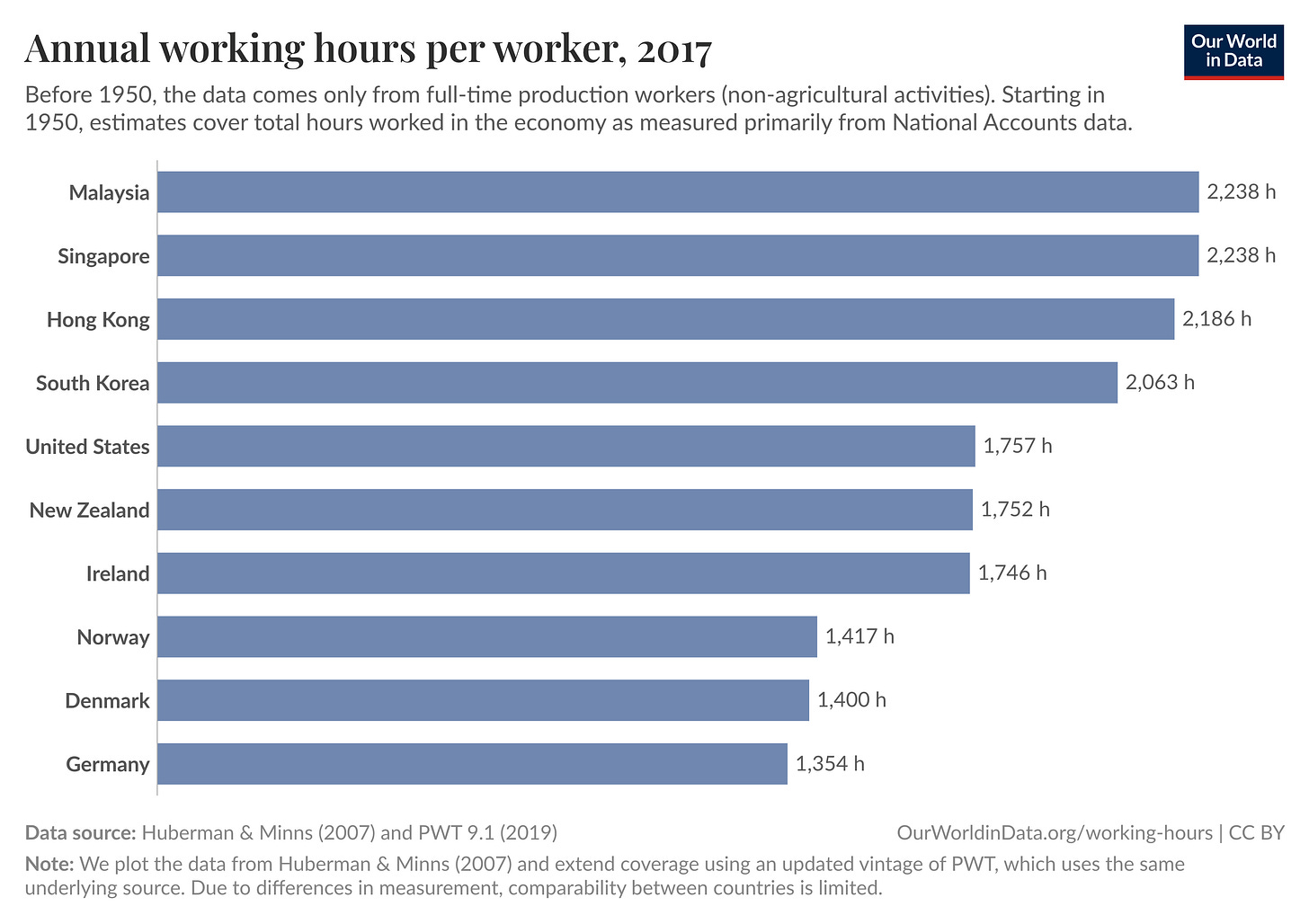

Work and our relationship to it live in the countless stories we carry in our heads, and these stories vary dramatically around the world. I suspect that they shift slowly over generations and only marginally across a median person’s lifetime. Just consider how sticky working hours within a country remain despite our global economy being so tightly linked. We have three different work realities when it comes to how much people work: Asia, the Anglosphere, and Europe.

Where you are born and what your parents think about work probably have more to do with how you think about work than what technology will do in the next 10-20 years.

Of course, I could be wrong about all this.

This post was a way for me to start thinking through this more deeply.

I think one of the biggest points that many people miss is that when you zoom out, some form of doing exists for all people throughout all of history. It is only in the last few hundred years that we started thinking about this through a much narrower lens of formal work or employment. This is attractive because we can track and count things. And if you can track and count it, you can design interventions to “fix” things or get “back” to normal. But this is just our desire for control in a situation that is starting to test our own understanding.

I sense what’s going to happen is not massive job loss due to hyper-efficient capital allocation in the short term, but instead, a slow and continued reimagination and questioning of our relationship with work. Our beliefs about work are in a subtle dance with the emerging technology all around us. It is that reflexive relationship that will shift our common knowledge about what a job is, what we expect from jobs and work, and how central it will be in an adult life. What direction that goes? I’m not quite sure. But the biggest opportunity is to dare to imagine better possible futures.

Good stuff.

I'd add what a lot of media isn't really emphasizing: Trump.

Trump's actions since assuming office have dramatically increased uncertainty and volatility, especially in financial outlooks, across the world. There is a broad consensus that the tariffs, the bizarre saber-rattling (Canada?!?) and the inconsistency are how you start a recession...from such pinkos as Jamie Dimon, as well as accomplished liberal economists like Brad DeLong. (Whose Substack is awesome, btw.)

Given *that*, with the promise/threat/big question mark of AI, new initiatives have been put on hold across the board. As a consultant, this is all my space is talking about...repositioning to emphasize critical stuff that increases revenue. Keeping terrible clients. Not raising prices, which means not growing. Most companies seem to be defaulting to the CFO, who is always a voice, of course, but not the driver of the car, and the CFO's default is "No" even in certain times. Similarly, hiring new and inexperienced staff is just a no-go "until this shakes out."

It's easier and safer to blame AI. AI is going to do terrible things to the economy, I have no doubt: the problem is that the "alternative jobs" it creates tend to be too advanced for an easy transition. You could teach a farmer how to stand in front of a conveyor belt and screw a thingamajig into a whatchadoodle thirty times an hour. Teaching a mediocre marketing manager to become a data scientist is a much less viable proposition. But the real answer is everyone's afraid the world financial markets are going to blow up, and there's too much fear around discussing the obvious cause.

I've long believed that the future is small...there's just going to be a lot more of it. Look for more independent pathless path folks using the new tools to do what it used to take a firm of 10 to do.

We're in a bubble. These guys are pumping up the hype because they need the attention to raise more cash and if they don't get the cash, the companies die. AI is not viable in the longer term on the current business model. It's either going to get a lot more expensive or it's going to get a lot more scarce.

Besides, technology adoption is incredibly hard and AI is nowhere near cracking that yet. As sources you refer to have pointed out, there are some massive assumptions in the arguments of the AI boosters.

I think you make an excellent point as well that we don't really understand what it is that AI will replace. Jobs are fluid and dynamic because the people and the relationships that are the context they are in are fluid and dynamic. An org chart is not how the organisation really works. A process diagram is not how the process actually works. If companies use AI to replace what they think their people are doing, it's likely to bring about their demise. We've been here before: remember GIGO, coined in the first wave of computerisation?